Federated Alternate Training (FAT): Leveraging Unannotated Data Silos in Federated Segmentation for Medical Imaging

Overview of FAT

Overview of FATAbstract

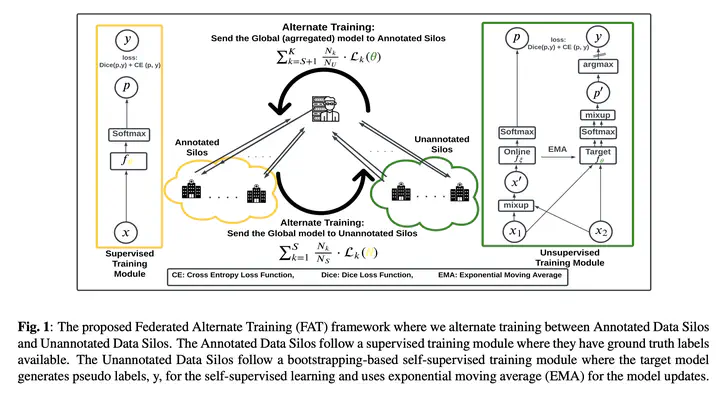

Federated Learning (FL) aims to train a machine learning (ML) model in a distributed fashion to strengthen data privacy with limited data migration costs. It is a distributed learning framework naturally suitable for privacy-sensitive medical imaging datasets. However, most current FL-based medical imaging works assume silos have ground truth labels for training. In practice, label acquisition in the medical field is challenging as it often requires extensive labor and time costs. To address this challenge and leverage the unannotated data silos to improve modeling, we propose an alternate training-based framework, Federated Alternate Training (FAT), that alters training between annotated data silos and unannotated data silos. Annotated data silos exploit annotations to learn a reasonable global segmentation model. Meanwhile, unannotated data silos use the global segmentation model as a target model to generate pseudo labels for self-supervised learning. We evaluate the performance of the proposed framework on two naturally partitioned Federated datasets, KiTS19 and FeTS2021, and show its promising performance.